Most people use AI the way they use Google: one search, one answer, move on. That works fine for quick questions. It falls apart when you’re trying to build something over weeks or months.

The core problem is that even with memory features switched on, what AI remembers between sessions is a compressed summary. Useful context, not full context. It knows you’re building a website about an arts collective. It doesn’t have the 264-article archive, the voice memo you recorded on Tuesday, and the content brief you drafted last month all simultaneously active and in play.

Memory is a convenience feature. A context window is a working environment, and they’re different things.

So when I’m running a complex project, I don’t rely on memory. I build a document.

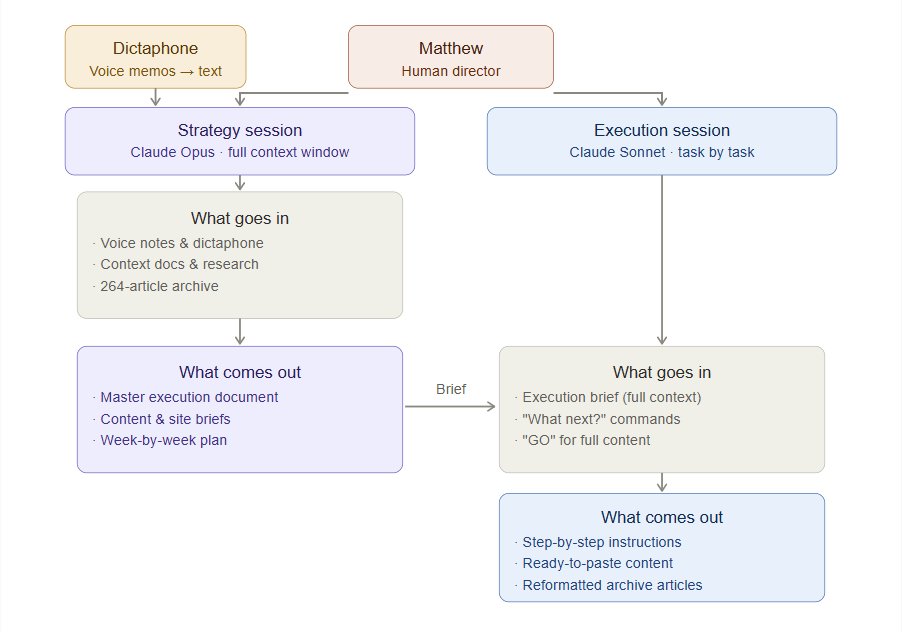

The two-chat architecture

The setup is two chats. Different models, different jobs. One holds the strategy and one executes it.

Claude Opus is the strategy brain. It gets fed everything: the full context, the constraints, the history, the source material. Voice memos I’ve recorded and transcribed, research documents, the entire body of content I’m working with. I ask it to synthesise, challenge, and plan. The output is a master document covering site architecture, content briefs, a week-by-week execution plan, and operating protocols. Not a prompt but a proper reference document that captures everything the project needs.

That document then becomes the context for the second chat. Claude Sonnet reads the brief and executes against it. No planning, no big-picture thinking, just the next task done correctly. “What next?” gives me step-by-step instructions. “GO” gives me complete, ready-to-use content. The brief is what allows this to work. Sonnet doesn’t need to hold the full picture in its head because the brief already does that.

The dictaphone piece matters more than it might sound. I think better out loud than I do typing, so rather than sitting down and carefully composing my thoughts into a prompt, I record a voice memo while walking around or on the train, transcribe it in seconds, and drop the raw text into the strategy chat. Opus handles the mess and half-formed ideas become structured plans. This has changed how I use AI more than anything else in the workflow. It removes the friction between thinking and doing.

Example one: building a website

This website was built entirely with this system. I fed Opus a 264-article WeChat archive, years of notes about the Spittoon Arts Collective, multiple voice memos about what I wanted to build and why, and my professional background. It produced an 824-element master execution document covering everything from the site map to the week-by-week build plan. That document has sat in the Sonnet chat ever since, and every session we work through it task by task.

The project went from idea to structured execution in a few sessions. More importantly, it stayed on track. Long projects drift. Context gets lost. Decisions get forgotten and relitigated. A master document that travels with the execution chat guards against all of that.

Example two: choosing a primary school

Not every use of this system is a professional project. Earlier this year my daughter was approaching school age and my wife and I needed to get our heads around the local options. There were several schools within reach, each with different reputations, results, and characters. We wanted a clear picture before we started visiting.

The first layer was Perplexity AI. I used it to pull together a research report on the schools we were considering, a structured overview drawing on publicly available data, inspection reports, and local information. Perplexity is well suited to this kind of targeted research sweep: it cites its sources and produces clean summaries quickly. That report became an input.

The second layer was the dictaphone. I recorded a voice memo, informal and unstructured, talking through what actually mattered to us as a family. The things that don’t appear in Ofsted reports: distance and logistics, the kind of environment we wanted for her, our instincts about what she’d thrive in. Raw and personal.

Both went into Opus. The Perplexity report as structured context, the voice memo as human context. Opus synthesised them into a single coherent picture, and Claude then produced a PDF dashboard with schools ranked against our stated priorities, key information surfaced, and questions to ask on visits flagged. Something we could actually use.

What would have been several evenings of spreadsheets and browser tabs took an afternoon. More importantly, the output reflected what we actually wanted rather than just what the data said, because the voice memo was in the mix from the start. Perplexity handles research breadth, Opus handles synthesis, and the voice memo supplies the human layer that neither of them can generate on their own. Each tool doing what it is genuinely good at.

What this system solves

Three things tend to kill AI-assisted projects. The first is context decay: long single chats degrade and the model starts losing or contradicting earlier decisions. The second is mode confusion, where trying to do planning and execution in the same conversation means neither gets done well because the focus keeps shifting. The third is the blank page problem of not knowing where to pick up after a few days away. “What next?” addresses all three because it assumes context, assumes a plan, and assumes progress.

How to set it up

Build your strategy chat first and feed it everything relevant. Ask for a master document and iterate until it captures the full picture. If you’re researching a topic rather than working from existing documents, run a Perplexity report first and drop that in alongside your own context. Record a voice memo of your priorities rather than typing them because the informality matters and it tends to surface things that careful typing edits out.
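If you'd rather assemble the strategy input programmatically than paste pieces in by hand, here is a minimal sketch in Python. It is not how I actually do it; the `Source` class, the `build_strategy_prompt` function, the section labels, and the placeholder texts are all hypothetical names chosen for illustration. The only idea it shows is keeping each input clearly labelled so the strategy model can tell the research report from the voice memo.

```python
from dataclasses import dataclass


@dataclass
class Source:
    """One input to the strategy chat (labels are illustrative, not prescribed)."""
    label: str
    text: str


def build_strategy_prompt(sources: list[Source], request: str) -> str:
    """Join labelled sources and a closing request into one block of text."""
    sections = [
        f"=== {s.label.upper()} ===\n{s.text.strip()}"
        for s in sources
        if s.text.strip()  # skip inputs that are empty or whitespace only
    ]
    sections.append(f"=== REQUEST ===\n{request.strip()}")
    return "\n\n".join(sections)


if __name__ == "__main__":
    # Placeholder texts; in practice these would be the real report and transcript.
    prompt = build_strategy_prompt(
        [
            Source("Research report", "(paste the research report here)"),
            Source("Voice memo transcript", "(paste the raw transcript here)"),
        ],
        "Synthesise these into a master document and challenge my assumptions.",
    )
    print(prompt)
```

The raw transcript goes in unedited on purpose, since the informality is the point.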

Then start your execution chat with the master document pasted in as context. Keep the two separate. If execution starts drifting into strategy, redirect it.
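For anyone driving the execution chat through code rather than a chat window, the same separation can be sketched like this. Everything here is an assumption for illustration: the `EXECUTION_RULES` wording, the `build_execution_messages` function, and the role/content message shape (a common chat convention, but check what your provider expects). No real API is called.

```python
# Hypothetical standing instructions for the execution chat, paraphrasing the
# "What next?" and "GO" commands described earlier in this post.
EXECUTION_RULES = """
You are the execution chat. Work only from the master document below.
If the user says "What next?", give step-by-step instructions for the next task.
If the user says "GO", produce complete, ready-to-use content for that task.
If a request needs new strategy, say so and send it back to the strategy chat.
"""


def build_execution_messages(master_document: str, user_message: str) -> list[dict[str, str]]:
    """Return a chat message list with the master document pinned as context."""
    if not master_document.strip():
        raise ValueError("The execution chat needs a master document to work from.")
    system = f"{EXECUTION_RULES.strip()}\n\nMASTER DOCUMENT\n{master_document.strip()}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user_message.strip()},
    ]


if __name__ == "__main__":
    # Placeholder document text; the real one would be the full master document.
    messages = build_execution_messages("(paste master document here)", "What next?")
    for message in messages:
        print(message["role"], "->", message["content"][:60])
```

The last rule in the instructions is the code version of redirecting execution when it drifts into strategy.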

You don’t need Opus and Sonnet specifically; the principle works with any two capable models. The quality gap matters though, so put your best model on strategy where the thinking is hardest. And use Perplexity or any research-first tool as a layer before strategy when you’re starting from scratch rather than from existing material. The chain of research, synthesis, and execution is what makes the system work.
